The graphics processing unit has undergone one of the most dramatic evolutions in computing history, growing from a simple 2D accelerator into a parallel computing engine that drives everything from photorealistic gaming to artificial intelligence. Over the past three decades, twelve distinct generations of graphics cards have emerged, each promising performance improvements that pushed the boundaries of visual computing. This analysis examines the performance jump delivered by each generation, from the pioneering 3D accelerators of the mid-1990s to today's AI-enhanced rendering hardware. By comparing architectural innovations, manufacturing process improvements, and real-world performance gains, we'll identify which generations delivered the most significant leaps and why certain transitions marked pivotal moments in graphics technology. Understanding these generational improvements isn't just about appreciating technological progress; it's about recognizing the patterns that have driven the industry forward and anticipating where future innovations might lead.

1. The Foundation Era - Early 3D Acceleration (1996-1999)

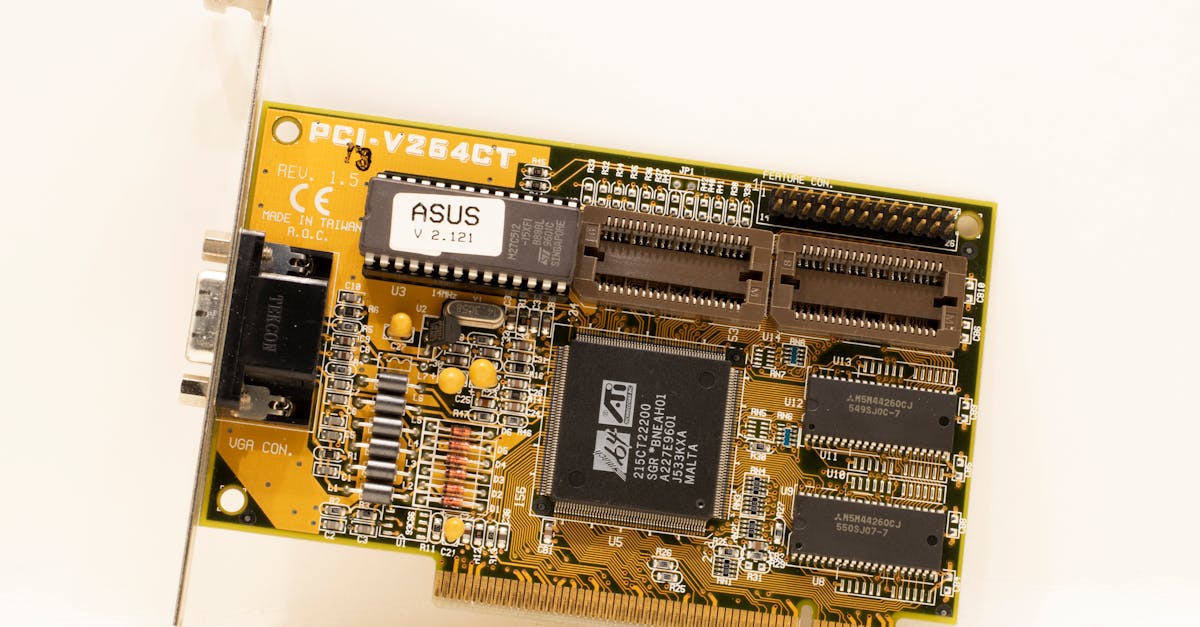

The late 1990s marked the birth of consumer 3D graphics acceleration, with pioneering cards like the 3dfx Voodoo Graphics (1996) and NVIDIA RIVA 128 (1997) establishing the foundation for all future GPU development. This first generation delivered an enormous performance jump over software-based 3D rendering, often providing 10-20x improvements in frame rates for games like Quake and Tomb Raider. The Voodoo Graphics could render textured polygons at speeds that made real-time 3D gaming viable for mainstream consumers, moving gaming from sprite-based 2D experiences to immersive 3D worlds. However, the earliest accelerators were limited in scope: the Voodoo Graphics handled only 3D rendering and required a separate 2D graphics card for desktop operations, although the RIVA 128 already combined both functions on one chip. The performance gains were revolutionary but came with significant limitations, including low resolutions, basic texture filtering, and primitive lighting models. Despite these constraints, this generation established the fundamental architecture of dedicated graphics processing that would evolve into modern GPUs. The jump from software rendering to hardware acceleration represented perhaps the most dramatic single-generation improvement in graphics performance history, laying the groundwork for the explosive growth in visual computing that would follow.

2. The Unification Generation - Integrated 2D/3D Solutions (1999-2001)

The second generation of graphics cards addressed the primary limitation of their predecessors by integrating 2D and 3D functionality into single-chip solutions, exemplified by cards like the NVIDIA GeForce 256 and ATI Radeon 7500. This generation delivered a more modest but crucial performance improvement of approximately 2-3x over first-generation cards while dramatically improving convenience and compatibility. The GeForce 256, marketed as the world's first GPU, introduced hardware transform and lighting (T&L), offloading geometric calculations from the CPU and providing smoother performance in complex 3D scenes. This architectural advancement meant that games could feature more detailed character models and environments without proportionally impacting frame rates. The integration of 2D and 3D functions eliminated the need for dual graphics cards, reducing system complexity and cost while improving stability. Performance improvements were particularly noticeable in CPU-limited scenarios, where the hardware T&L could provide 50-100% frame rate increases in geometry-heavy games. While the raw polygon throughput improvements were incremental compared to the previous generation's revolutionary jump, the architectural refinements and feature additions made this generation essential for the mainstream adoption of 3D gaming. This period established the template for modern GPU design, where performance improvements would increasingly come from architectural efficiency rather than just raw processing power increases.
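To make concrete what hardware T&L takes over from the CPU, here is a minimal Python sketch of the two per-vertex steps: a 4x4 matrix transform and a simple diffuse lighting term. The function names `transform_vertex` and `diffuse_intensity`, the example matrix, and the choice of a Lambertian lighting term are illustrative assumptions, not a description of the GeForce 256's actual fixed-function pipeline.

```python
def transform_vertex(matrix, vertex):
    """Multiply a 4x4 row-major matrix by a 4-component column vector."""
    return [sum(m * v for m, v in zip(row, vertex)) for row in matrix]


def diffuse_intensity(normal, light_dir):
    """Lambertian term: dot(N, L) clamped to [0, 1], for unit-length vectors."""
    dot = sum(n * l for n, l in zip(normal, light_dir))
    return max(0.0, min(1.0, dot))


# Hypothetical transform: translate every vertex by 2 units along x.
TRANSLATE_X2 = [
    [1.0, 0.0, 0.0, 2.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
]

if __name__ == "__main__":
    vertex = [1.0, 1.0, 1.0, 1.0]   # homogeneous position (x, y, z, w)
    normal = [0.0, 0.0, 1.0]        # surface normal facing +z
    light = [0.0, 0.0, 1.0]         # light shining straight at the surface
    print(transform_vertex(TRANSLATE_X2, vertex))
    print(diffuse_intensity(normal, light))
```

Without hardware T&L, the CPU repeats this arithmetic for every vertex in every frame, which is why geometry-heavy, CPU-limited scenes saw the largest gains.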

3. The Shader Revolution - Programmable Pipeline Introduction (2001-2003)

The third generation marked another revolutionary leap with the introduction of programmable shaders, led by the NVIDIA GeForce 3 (2001) and later refined by the ATI Radeon 9700 Pro (2002). This generation delivered performance improvements of 3-4x in shader-heavy applications while fundamentally changing how graphics were rendered. The GeForce 3's vertex shaders, paired with a first generation of short pixel shader programs, allowed developers to create more realistic lighting effects, detailed character animations, and complex geometric transformations that were previously impossible or prohibitively expensive. The Radeon 9700 Pro later introduced DirectX 9-class pixel shaders, enabling per-pixel lighting calculations that dramatically improved visual quality in later games like Doom 3 and Half-Life 2. The performance jump wasn't just about raw speed; it was about enabling entirely new categories of visual effects that had been relegated to pre-rendered cinematics. Games could now feature realistic water reflections, dynamic shadows, and complex material properties in real time. The architectural shift from fixed-function pipelines to programmable shaders represented a fundamental change in GPU design philosophy, moving from specialized hardware units to more flexible, general-purpose processing elements. This generation established the foundation for modern shader-based rendering and demonstrated that future graphics improvements would increasingly depend on software innovation rather than just hardware brute force.

4. The DirectX 9 Maturation - Shader Model 2.0 and Beyond (2003-2005)

The fourth generation refined programmable shaders with longer, more complex programs and improved precision, exemplified by cards like the NVIDIA GeForce FX 5900 and ATI Radeon X800. While the raw performance improvement was more modest at 2-2.5x, this generation delivered significant advances in visual quality and shader complexity. Shader Model 2.0, together with the extended 2.0a and 2.0b profiles these cards supported, allowed for much longer pixel shader programs, enabling sophisticated effects like realistic skin rendering, complex material shaders, and advanced lighting models. The ATI Radeon X800 particularly excelled in this area, providing superior performance in shader-heavy games while maintaining excellent image quality. This generation also saw the maturation of anti-aliasing techniques, with cards finally having enough raw power to enable 4x MSAA at reasonable frame rates in most games. The performance improvements were particularly noticeable in games that heavily utilized the new shader capabilities, with titles like Far Cry and Doom 3 showing dramatic visual improvements over previous-generation hardware. Manufacturing process improvements to 130nm and 110nm nodes contributed to better power efficiency and higher clock speeds. While not as revolutionary as the introduction of shaders themselves, this generation proved that incremental improvements in shader capability could deliver substantial real-world benefits, establishing a pattern of evolutionary rather than revolutionary progress that would characterize many future generations.

5. The High-Definition Era - Shader Model 3.0 and HDR (2005-2006)

The fifth generation brought Shader Model 3.0, first seen on NVIDIA's GeForce 6 series in 2004, and high dynamic range (HDR) rendering into the mainstream, led by the NVIDIA GeForce 7800 GTX and ATI Radeon X1900. This generation delivered approximately 2.5-3x performance improvements while enabling new visual technologies that would become standard in modern gaming. The GeForce 7800 GTX's support for longer, more complex shaders allowed developers to implement sophisticated lighting models and material effects that more closely approximated real-world physics. HDR rendering capability meant games could represent a far wider range of brightness levels, from deep shadows to brilliant highlights, creating more realistic and immersive environments. The architectural improvements included better memory bandwidth utilization and more efficient shader execution, resulting in smoother performance even as visual complexity increased. Games like Oblivion and F.E.A.R. showcased the generation's capabilities with expansive outdoor environments and complex indoor lighting that maintained playable frame rates at 1280x1024 resolution. The arrival of SLI and CrossFire multi-GPU technologies around this period also demonstrated the industry's recognition that single-card performance improvements were becoming more challenging to achieve. Manufacturing advances toward 90nm processes enabled higher transistor counts and improved power efficiency, setting the stage for the more dramatic architectural changes that would follow in subsequent generations.

6. The Unified Architecture Revolution - DirectX 10 and Geometry Shaders (2006-2008)

The sixth generation represented one of the most significant architectural overhauls in GPU history, with NVIDIA's GeForce 8800 GTX introducing unified shader architecture and DirectX 10 support. This generation delivered performance improvements of 3-4x in DirectX 10 applications while fundamentally changing GPU design principles. The unified architecture meant that vertex, pixel, and the newly introduced geometry shaders all ran on the same processing units, dramatically improving efficiency and enabling better load balancing across different types of graphics workloads. The GeForce 8800 GTX's 128 unified shader processors could dynamically allocate resources based on application needs, providing superior performance across a wider range of scenarios than previous designs with separate, dedicated vertex and pixel shader units. Geometry shaders let the GPU create or discard primitives on the fly, supporting geometric effects like fur, grass, and particle systems that had been impractical at interactive frame rates; dedicated hardware tessellation would only arrive with DirectX 11. The architectural changes also laid the groundwork for general-purpose GPU computing (GPGPU), with NVIDIA introducing CUDA during this period. Games like Crysis demonstrated the generation's capabilities with unprecedented visual fidelity, though they also highlighted the substantial performance requirements of DirectX 10 features. The 8800 GTX itself was built on a 90nm process, and the later move to 80nm and 65nm nodes helped keep power consumption reasonable for these complex unified architectures, though thermal design became increasingly important for high-end cards.
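The load-balancing argument can be illustrated with a toy model. The sketch below compares a design with dedicated vertex and pixel units against a unified pool of the same total size, assuming work divides perfectly across units. The unit counts (8 vertex, 24 pixel) and workload numbers are hypothetical values chosen for illustration, and the model ignores scheduling overhead, memory bandwidth, and everything else a real GPU has to contend with.

```python
def dedicated_frame_time(vertex_units, pixel_units, vertex_work, pixel_work):
    """Frame time when each pool can only run its own kind of work.

    The slower pool sets the frame time while the other pool sits partly idle.
    """
    return max(vertex_work / vertex_units, pixel_work / pixel_units)


def unified_frame_time(total_units, vertex_work, pixel_work):
    """Frame time when any unit can run any work (idealized balancing)."""
    return (vertex_work + pixel_work) / total_units


if __name__ == "__main__":
    # Hypothetical workloads as (vertex_work, pixel_work) in unit-cycles.
    workloads = {
        "balanced for 8/24 split": (800, 2400),
        "geometry-heavy": (1600, 1600),
        "pixel-heavy": (320, 2880),
    }
    for name, (v_work, p_work) in workloads.items():
        dedicated = dedicated_frame_time(8, 24, v_work, p_work)
        unified = unified_frame_time(32, v_work, p_work)
        print(f"{name}: dedicated={dedicated:.1f}, unified={unified:.1f}")
```

In this model the unified pool is never slower than the split design, because its frame time is a weighted average of the two dedicated pools' times rather than their maximum. The two only tie when the workload happens to match the fixed split.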

7. The Refinement Phase - DirectX 10.1 and Efficiency Improvements (2008-2009)

The seventh generation focused on refining the unified architecture while improving power efficiency and manufacturing yields, exemplified by the NVIDIA GeForce GTX 280 and ATI Radeon HD 4870. Performance improvements were more modest at 1.5-2x, but this generation delivered significant advances in performance per watt and manufacturing cost reduction. The GTX 280's larger die size and higher shader count provided brute-force performance improvements, while the HD 4870 demonstrated that architectural efficiency could deliver competitive performance with smaller, more cost-effective designs. This generation marked ATI's return to high-end GPU competitiveness after several years of NVIDIA dominance, with the HD 4870 rivaling the GTX 260 and coming within reach of the GTX 280 while consuming less power and costing significantly less. The introduction of GDDR5 memory on the HD 4870 provided substantial bandwidth improvements that enabled higher resolutions and more complex shading effects without proportional performance penalties. DirectX 10.1 support on ATI's cards added incremental features like improved anti-aliasing and more flexible resource management, though adoption was limited because NVIDIA's GTX 200 series did not support it and the installed base of Windows Vista systems was small. The generation also saw the maturation of multi-GPU scaling, with both SLI and CrossFire delivering more consistent performance improvements across a broader range of applications. Manufacturing improvements to 55nm processes enabled higher clock speeds and better yields, making high-performance graphics more accessible to mainstream consumers.

8. The DirectX 11 Revolution - Tessellation and Compute Shaders (2009-2011)

The eighth generation introduced DirectX 11 with hardware tessellation and compute shaders, led by the ATI Radeon HD 5870 and later challenged by the NVIDIA GeForce GTX 480. This generation delivered 2-3x performance improvements in tessellation-heavy applications while introducing capabilities that would define the next decade of graphics development. The HD 5870's dedicated tessellation unit could dynamically subdivide geometry based on viewing distance and screen space, enabling highly detailed surface geometry without the memory overhead of storing high-polygon models. Compute shaders provided a standardized way to perform general-purpose calculations on the GPU, enabling advanced post-processing effects, physics simulations, and even non-graphics applications to benefit from GPU acceleration. The architectural improvements included better memory compression, more efficient rasterization, and improved multi-threading support that enabled better CPU-GPU parallelism. Games like Battlefield 3 and Metro 2033 showcased tessellation's ability to add fine surface detail to characters and environments, creating more realistic and immersive visual experiences. The generation also marked a significant improvement in power efficiency, with the HD 5870 delivering substantially better performance per watt than previous high-end cards. Manufacturing advances to 40nm processes enabled the complex tessellation hardware while maintaining reasonable die sizes and costs, though yield issues initially limited availability of the most powerful cards.
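Distance-based subdivision can be sketched in a few lines. The function below picks a tessellation factor by linearly interpolating between a maximum factor for nearby patches and a minimum factor for distant ones. The name `tessellation_factor`, the near and far distances, and the linear falloff are assumptions made for this illustration. The upper limit of 64 matches the maximum tessellation factor in Direct3D 11, but real engines typically use screen-space metrics and their own falloff curves.

```python
def tessellation_factor(distance, near=1.0, far=100.0,
                        min_factor=1.0, max_factor=64.0):
    """Return a subdivision factor that falls off linearly with distance.

    Patches at or closer than `near` get `max_factor`; patches at or beyond
    `far` get `min_factor` (no extra subdivision).
    """
    if far <= near:
        raise ValueError("far must be greater than near")
    # Normalize distance to [0, 1] and clamp so out-of-range values saturate.
    t = (distance - near) / (far - near)
    t = min(max(t, 0.0), 1.0)
    return max_factor + t * (min_factor - max_factor)


if __name__ == "__main__":
    for d in (0.5, 1.0, 25.0, 50.5, 100.0, 500.0):
        print(f"distance={d:6.1f} -> factor={tessellation_factor(d):.2f}")
```

Because the detail is generated on the GPU each frame, only the coarse patch needs to be stored, which is the source of the memory savings described above.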

9. The Architecture Wars - Fermi vs. VLIW4 Optimization (2010-2012)

The ninth generation was characterized by competing architectural philosophies, with NVIDIA's Fermi architecture (GTX 580) emphasizing compute performance and AMD's VLIW4 design (HD 6970), a refinement of the earlier VLIW5 layout, focusing on graphics optimization. Performance improvements were relatively modest at 1.5-2x, but this generation established important precedents for future GPU development. The GTX 580's Fermi architecture featured improved shader efficiency, better branch handling, and enhanced double-precision floating-point performance that benefited both graphics and compute applications. The HD 6970's VLIW4 architecture maximized graphics performance through shader units tuned for typical graphics workloads, often delivering competitive frame rates in traditional gaming scenarios. This generation highlighted the growing importance of software optimization, with games increasingly showing significant performance differences based on how well they utilized each architecture's strengths. The introduction of post-process anti-aliasing techniques like FXAA provided better image quality with lower performance penalties than traditional MSAA. Power efficiency improvements were incremental but important, with both architectures delivering better performance per watt than their predecessors through architectural refinements rather than process improvements, since both remained on 40nm. The generation also saw the maturation of GPU computing applications, with CUDA and OpenCL enabling graphics cards to accelerate everything from video encoding to scientific simulations, expanding the market beyond traditional gaming applications.

10. The 28nm Leap - Kepler and GCN Architecture Introduction (2012-2014)

The tenth generation marked a significant manufacturing node transition to 28nm, enabling the NVIDIA GeForce GTX 680 (Kepler) and AMD Radeon HD 7970 (Graphics Core Next) to deliver 2.5-3x performance improvements while dramatically improving power efficiency. The GTX 680's Kepler architecture introduced GPU Boost technology, which dynamically adjusted clock speeds based on power and thermal headroom, maximizing performance within design constraints. The HD 7970's GCN architecture represented AMD's shift away from VLIW designs toward a more compute-focused approach that balanced graphics and general-purpose processing capabilities. Both architectures benefited enormously from the 28nm process node, which enabled much higher transistor counts and clock speeds while actually reducing power consumption compared to previous generations. The performance improvements were particularly dramatic in high-resolution gaming, with both cards capable of delivering playable frame rates at 2560x1440 resolution in most contemporary games. Advanced features like hardware-accelerated video decoding, improved multi-monitor support, and enhanced tessellation performance made this generation appealing for both gaming and professional applications. The architectural efficiency improvements meant that mid-range cards from this generation often outperformed high-end cards from the previous generation while consuming less power and producing less heat. This generation established the foundation for modern GPU architectures and demonstrated the continued importance of manufacturing process improvements in delivering generational performance gains.

11. The Maxwell Revolution - Efficiency and Feature Integration (2014-2016)

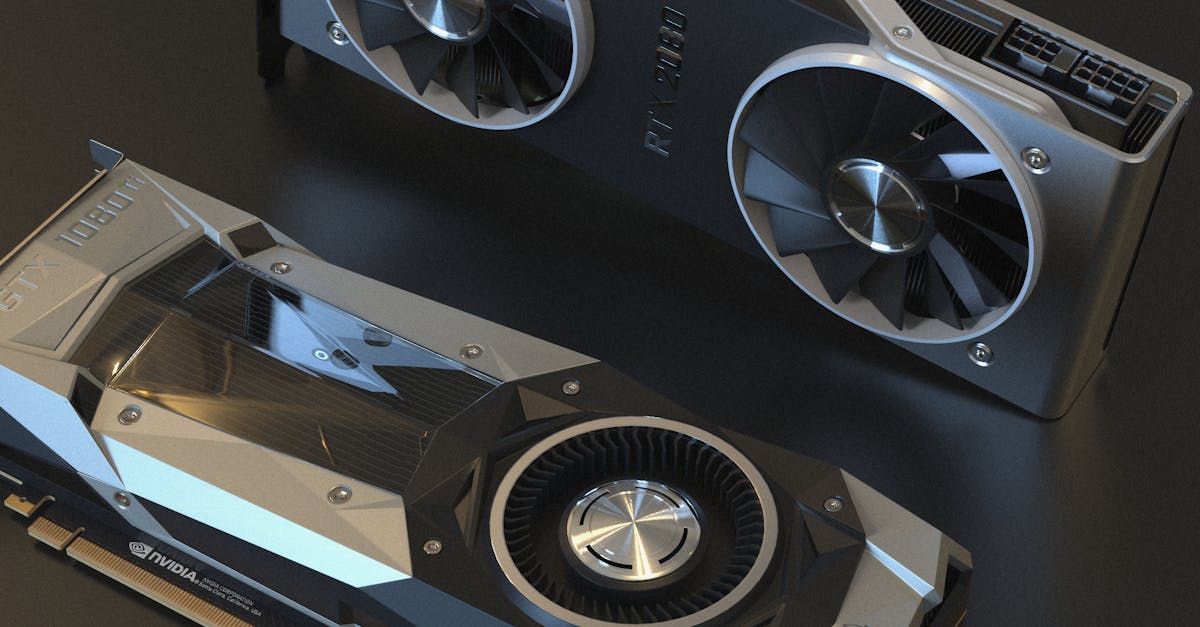

The eleventh generation, dominated by NVIDIA's Maxwell architecture in the GTX 900 series, delivered remarkable 2-3x performance per watt improvements while introducing features that would become essential for modern gaming. The GTX 980's Maxwell architecture represented a complete redesign focused on efficiency, with each shader unit capable of more work per clock cycle and improved memory compression reducing bandwidth requirements. While raw performance improvements were more modest at 1.5-2x, the architectural efficiency gains enabled much more powerful graphics cards within reasonable power envelopes. The introduction of hardware-accelerated H.265 encoding and decoding positioned these cards for the emerging 4K content creation market, while improved NVENC capabilities made GPU-accelerated streaming accessible to mainstream users. Advanced anti-aliasing techniques like MFAA (Multi-Frame Anti-Aliasing) provided better image quality than traditional methods while actually improving performance in many scenarios. The generation also introduced more sophisticated power management, with cards capable of near-zero power consumption during idle periods and rapid transitions between power states during varying workloads. Games like The Witcher 3 and Grand Theft Auto V showcased the generation's ability to deliver consistent performance at 1440p resolution with high detail settings. AMD's competing Radeon R9 390X provided strong competition in raw performance but couldn't match Maxwell's efficiency, highlighting the importance of architectural optimization over pure computational throughput in modern GPU design.
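The two sets of figures in this section, performance per watt and raw performance, together imply something about power draw, since performance per watt is simply performance divided by power. The short sketch below works through that arithmetic. The function name `implied_power_ratio` is an assumption of this example, and the inputs are the approximate ranges quoted above rather than measured values.

```python
def implied_power_ratio(perf_gain, perf_per_watt_gain):
    """Power draw of the new card relative to the old one.

    Follows from perf_per_watt = perf / power, so
    power_new / power_old = perf_gain / perf_per_watt_gain.
    """
    if perf_gain <= 0 or perf_per_watt_gain <= 0:
        raise ValueError("gains must be positive")
    return perf_gain / perf_per_watt_gain


if __name__ == "__main__":
    # Approximate ranges quoted in the text for this generation.
    for perf in (1.5, 2.0):
        for ppw in (2.0, 3.0):
            ratio = implied_power_ratio(perf, ppw)
            print(f"perf x{perf}, perf/watt x{ppw} -> power x{ratio:.2f}")
```

Across the quoted ranges the implied power draw is at most equal to the previous generation's and can be as low as half of it, which is consistent with the claim that the gains came from efficiency rather than larger power budgets.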

12. The 4K and VR Era - Pascal and Polaris Optimization (2016-2018)

The twelfth generation brought the transition to 16nm/14nm manufacturing processes with NVIDIA's Pascal (GTX 1080) and AMD's Polaris (RX 480) architectures, delivering 2-2.5x performance improvements while bringing 4K gaming and virtual reality closer to the mainstream. The GTX 1080's Pascal architecture combined the efficiency lessons of Maxwell with substantially higher clock speeds and memory bandwidth, putting 60fps gaming at 4K resolution within reach in many titles, often with some settings reduced. The architectural improvements included better instruction scheduling, improved memory compression, and enhanced geometry processing that reduced bottlenecks in complex scenes. VR-specific optimizations like simultaneous multi-projection and VR SLI supported the low-latency, high-frame-rate rendering essential for comfortable virtual reality experiences. AMD's Polaris architecture focused on bringing high-end features to mainstream price points, with the RX 480 delivering performance in the range of the previous generation's GTX 970 and GTX 980 at a much lower cost and power consumption. The generation also saw significant improvements in display connectivity, with native support for HDR displays, higher refresh rates, and multiple 4K monitors becoming standard features. Toward the end of this period, hardware-accelerated ray tracing was announced for professional cards, foreshadowing the major architectural changes that would come in the next generation. The manufacturing process improvements enabled much higher transistor densities and clock speeds while maintaining reasonable power consumption, though thermal design became increasingly critical for extracting maximum performance from these powerful chips.
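As a rough back-of-the-envelope exercise, the per-generation multipliers quoted in sections 2 through 12 can be compounded to see what they imply in aggregate. The sketch below multiplies the low and high ends of each range and derives the average per-generation gain as a geometric mean. The table name `GENERATION_GAINS` is an assumption of this example, and because the quoted figures refer to different workloads (shader-heavy, DirectX 10, tessellation-heavy, and so on), the result is an order-of-magnitude illustration rather than a benchmark.

```python
import math

# (low, high) multipliers as quoted for generations 2 through 12.
# Generation 11 uses the raw-performance figure, not performance per watt.
GENERATION_GAINS = {
    2: (2.0, 3.0),
    3: (3.0, 4.0),
    4: (2.0, 2.5),
    5: (2.5, 3.0),
    6: (3.0, 4.0),
    7: (1.5, 2.0),
    8: (2.0, 3.0),
    9: (1.5, 2.0),
    10: (2.5, 3.0),
    11: (1.5, 2.0),
    12: (2.0, 2.5),
}


def compounded_gain(gains):
    """Return (low, high) cumulative multipliers across all generations."""
    low = math.prod(lo for lo, _ in gains.values())
    high = math.prod(hi for _, hi in gains.values())
    return low, high


def average_gain_per_generation(total_gain, generations):
    """Geometric mean: the constant multiplier that compounds to total_gain."""
    return total_gain ** (1.0 / generations)


if __name__ == "__main__":
    low, high = compounded_gain(GENERATION_GAINS)
    n = len(GENERATION_GAINS)
    print(f"cumulative gain: {low:,.1f}x to {high:,.1f}x")
    print(f"average per generation: "
          f"{average_gain_per_generation(low, n):.2f}x to "
          f"{average_gain_per_generation(high, n):.2f}x")
```

With the figures quoted above, the product comes out to roughly 3,000x at the low end and about 65,000x at the high end, which shows how even "modest" 1.5-2x generations add up once they compound.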

13. The Modern Era and Future Implications - Architectural Evolution Patterns